Data-Driven Energy Analysis

How the world's energy systems actually work

Analysis of power grids, data center energy, and renewable infrastructure. No spin, just data.

Data centers in the United States consumed approximately 176 terawatt-hours of electricity in 2023, roughly 4.4% of total national electricity consumption. To put that in perspective, that is enough electricity to power about 16 million American homes for an entire year. Globally, data centers consumed around 415 terawatt-hours in 2024, representing about 1.5% of worldwide electricity use.
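The household comparison can be checked with simple arithmetic. The sketch below back-calculates what the figures above imply; the function and constant names are illustrative, and the results are implied averages derived from the quoted numbers, not independently sourced statistics.

```python
# Back-of-the-envelope check of the "16 million homes" comparison.
# Inputs are the figures quoted in the text; all names are illustrative.
US_DATA_CENTER_TWH_2023 = 176.0   # terawatt-hours, from the text
HOMES_POWERED = 16_000_000        # homes, from the text
US_SHARE = 0.044                  # 4.4% of national consumption, from the text

KWH_PER_TWH = 1_000_000_000       # 1 TWh = 1e9 kWh


def implied_kwh_per_home(total_twh: float, homes: int) -> float:
    """Annual electricity per home implied by the comparison, in kWh."""
    return total_twh * KWH_PER_TWH / homes


def implied_national_total_twh(part_twh: float, share: float) -> float:
    """Total consumption implied when part_twh is `share` of the whole."""
    return part_twh / share


if __name__ == "__main__":
    per_home = implied_kwh_per_home(US_DATA_CENTER_TWH_2023, HOMES_POWERED)
    total = implied_national_total_twh(US_DATA_CENTER_TWH_2023, US_SHARE)
    print(f"Implied use per home: {per_home:,.0f} kWh/year")
    print(f"Implied US total: {total:,.0f} TWh")
```

With these inputs the comparison implies about 11,000 kWh per home per year and a national total of about 4,000 TWh.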

These numbers have been growing at roughly 12% per year over the past five years, and the rate of growth is accelerating. The International Energy Agency projects that global data center electricity consumption could reach between 650 and 1,050 terawatt-hours by 2026. In the United States, S&P Global forecasts that data center power demand will rise to 75.8 gigawatts in 2026 and could reach 134 gigawatts by 2030.
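To get a feel for what these growth figures mean, the sketch below applies standard compound-growth arithmetic to the numbers quoted above. The function names are illustrative, and the calculation assumes smooth year-over-year compounding, which real demand does not follow exactly.

```python
# Compound-growth arithmetic on the figures quoted in the text.
# Assumes smooth annual compounding; function names are illustrative.


def project(start: float, annual_rate: float, years: int) -> float:
    """Value after `years` of growth at a constant `annual_rate`."""
    return start * (1.0 + annual_rate) ** years


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # 415 TWh in 2024, extended two years at the historical ~12% rate.
    twh_2026 = project(415.0, 0.12, 2)
    print(f"415 TWh at 12%/year for 2 years: {twh_2026:.0f} TWh")

    # S&P Global forecast: 75.8 GW in 2026 to 134 GW in 2030 (4 years).
    rate = implied_annual_growth(75.8, 134.0, 4)
    print(f"Implied US demand growth, 2026 to 2030: {rate:.1%} per year")
```

At a constant 12% per year, 415 TWh would grow to roughly 521 TWh after two years, and the S&P Global forecast implies growth of a little over 15% per year between 2026 and 2030.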

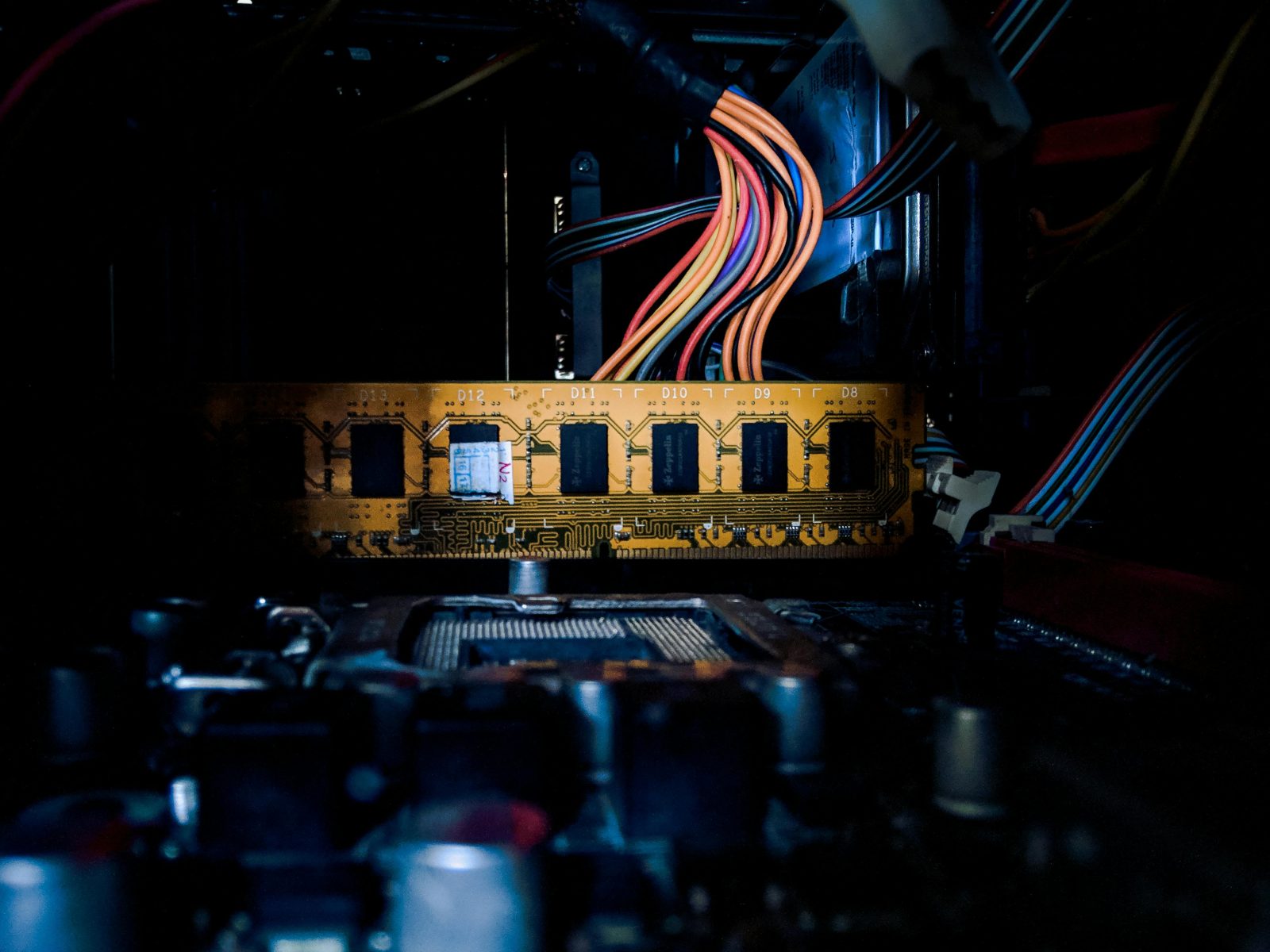

Inside a data center, electricity consumption breaks down into several categories. IT equipment, including servers, storage systems, and networking gear, accounts for 40% to 50% of total electricity use. Cooling systems consume another 30% to 40%, as all that computing hardware generates enormous amounts of heat that must be removed to prevent equipment failure. Power distribution infrastructure, including uninterruptible power supplies and transformers, uses another 10% to 15%.

The efficiency of a data center is measured by its Power Usage Effectiveness, or PUE. A PUE of 1.0 would mean every watt of electricity goes directly to computing, with no overhead for cooling or other systems. In practice, modern hyperscale data centers achieve PUE ratings between 1.1 and 1.3. Older enterprise data centers often have PUE ratings of 1.5 to 2.0 or higher.
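PUE is the ratio of total facility energy to the energy delivered to IT equipment. The sketch below shows that ratio and the overhead share it implies; the function names and the sample meter readings are hypothetical, chosen only to illustrate the arithmetic.

```python
# PUE = total facility energy / IT equipment energy.
# Function names and sample readings are hypothetical.


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness for a given measurement period."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that does not reach IT equipment."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue_value


if __name__ == "__main__":
    # Hypothetical facility: 1,300 MWh drawn in total, 1,000 MWh used by IT.
    value = pue(1_300_000.0, 1_000_000.0)
    print(f"PUE: {value:.2f}")
    print(f"Overhead share of total energy: {overhead_share(value):.1%}")
```

Because overhead share is 1 - 1/PUE, a PUE of 2.0 means half of all facility energy goes to something other than computing, while a PUE of 1.1 puts overhead at about 9%.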

Artificial intelligence is fundamentally changing the electricity equation for data centers. Traditional computing tasks use relatively little energy: a standard Google search query consumes about 0.3 watt-hours of electricity. The real power demand comes from AI model training, which requires thousands of specialized processors running continuously for weeks or months.

The shift toward AI-optimized hardware is driving a dramatic increase in power density within data centers. Nvidia’s latest GPU clusters can consume 70 kilowatts or more per rack, compared to 5 to 10 kilowatts for a traditional server rack. Facilities designed for AI workloads are now regularly planned at 100 megawatts to 1 gigawatt, roughly the power needs of 80,000 to 800,000 homes.
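The density and home-equivalence figures can be related with a few unit conversions. The sketch below is a rough illustration with hypothetical function names; the rack count assumes every watt of facility power reaches the racks, which ignores cooling and distribution overhead and therefore overstates what a real site could host.

```python
# Unit conversions on the rack-density and facility-size figures in the text.
# Simplifying assumption: all facility power reaches the racks (no overhead).

KW_PER_MW = 1_000


def racks_supported(facility_mw: float, rack_kw: float) -> float:
    """Upper bound on rack count if all facility power fed the racks."""
    return facility_mw * KW_PER_MW / rack_kw


def implied_kw_per_home(facility_mw: float, homes: int) -> float:
    """Average continuous draw per home implied by a homes comparison."""
    return facility_mw * KW_PER_MW / homes


if __name__ == "__main__":
    for rack_kw in (10.0, 70.0):
        count = racks_supported(100.0, rack_kw)
        print(f"100 MW at {rack_kw:.0f} kW per rack: about {count:,.0f} racks")

    per_home = implied_kw_per_home(100.0, 80_000)
    print(f"Implied average draw per home: {per_home:.2f} kW")
```

Both ends of the homes comparison (100 megawatts for 80,000 homes and 1 gigawatt for 800,000 homes) work out to the same implied average of 1.25 kilowatts per home.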

Data center construction is concentrated in specific regions of the United States. Northern Virginia remains the global epicenter, with approximately 4 gigawatts of data center capacity and aggressive expansion underway. Texas, particularly the Dallas-Fort Worth area, is the second-largest market. Other major clusters include central Ohio around Columbus, the greater Atlanta metro area, and parts of Oregon and Arizona.

The geographic pattern is shifting. Developers are expanding beyond traditional hubs into areas with available power capacity, affordable land, and existing fiber-optic infrastructure. New projects are being planned across Pennsylvania, the Carolinas, Illinois, and along the Gulf Coast.

The scale of data center power demand is straining the electrical grid. The North American Electric Reliability Corporation has warned of elevated risk of electricity shortfalls in 2026 and beyond in regions experiencing rapid data center growth. Utilities are scrambling to build new generation capacity, but new generation takes longer to develop than data center operators are willing to wait.

In response, data center developers are increasingly exploring behind-the-meter power solutions, including on-site natural gas turbines, fuel cells, and even small modular nuclear reactors, to bypass grid constraints and secure the power they need on their own timelines.

Despite the dramatic growth in absolute power consumption, the data center industry has made substantial efficiency gains. The average PUE has improved from 2.5 in 2007 to about 1.55 in 2022. Advanced liquid cooling technologies can push PUE below 1.1.
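The size of that efficiency gain is easier to see as a calculation. The sketch below compares two PUE values for the same IT load; the function names are illustrative, and the comparison holds IT energy fixed, which isolates the facility-level improvement and says nothing about gains in the servers themselves.

```python
# Compare two PUE values for the same IT load.
# Holding IT energy fixed is a simplifying assumption; names are illustrative.


def total_energy_reduction(old_pue: float, new_pue: float) -> float:
    """Fractional drop in total facility energy for a fixed IT load."""
    return 1.0 - new_pue / old_pue


def overhead_reduction(old_pue: float, new_pue: float) -> float:
    """Fractional drop in non-IT (overhead) energy for a fixed IT load."""
    return 1.0 - (new_pue - 1.0) / (old_pue - 1.0)


if __name__ == "__main__":
    old, new = 2.5, 1.55  # average PUE in 2007 and 2022, from the text
    print(f"Total energy per unit of IT load: down {total_energy_reduction(old, new):.0%}")
    print(f"Overhead energy per unit of IT load: down {overhead_reduction(old, new):.0%}")
```

Going from a PUE of 2.5 to 1.55 cuts total facility energy per unit of IT load by 38% and overhead energy by about 63%.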

The question facing the industry is whether efficiency gains can keep pace with demand growth. By 2030, data centers could account for 6% to 12% of total US electricity consumption, up from 4.4% in 2023. Meeting this demand while maintaining grid reliability represents one of the most significant infrastructure challenges of the decade.
